-->Inheritance

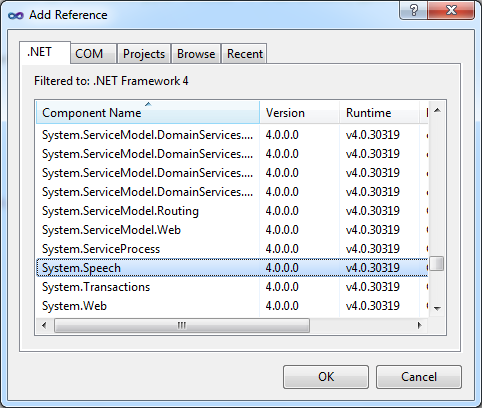

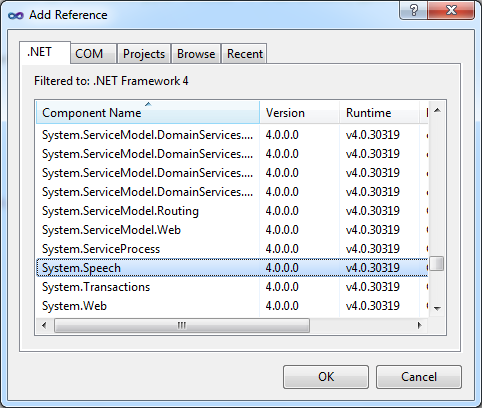

I've looked at other questions for this, and none of the answers have helped me. The problem seems to be that I can't get mex to recognize my compilers which are clearly installed on my computer. Any help would be amazing, I can't seem to figure this out. The following example is part of a console application that loads a speech recognition grammar and demonstrates asynchronous emulated input, the.

Definition

Provides access to the shared speech recognition service available on the Windows desktop.

SpeechRecognizer

- Implements

Examples

The following example is part of a console application that loads a speech recognition grammar and demonstrates asynchronous emulated input, the associated recognition results, and the associated events raised by the speech recognizer. If Windows Speech Recognition is not running, starting this application also starts Windows Speech Recognition. If Windows Speech Recognition is in the Sleeping state, then EmulateRecognize always returns null (EmulateRecognizeAsync itself has no return value).

Remarks

Applications use the shared recognizer to access Windows Speech Recognition. Use the SpeechRecognizer object to add to the Windows speech user experience.

This class provides control over various aspects of the speech recognition process:

- To manage speech recognition grammars, use the LoadGrammar, LoadGrammarAsync, UnloadGrammar, and UnloadAllGrammars methods and the Grammars property.

- To get information about current speech recognition operations, subscribe to the SpeechRecognizer's SpeechDetected, SpeechHypothesized, SpeechRecognitionRejected, and SpeechRecognized events.

- To view or modify the number of alternate results the recognizer returns, use the MaxAlternates property. The recognizer returns recognition results in a RecognitionResult object.

- To access or monitor the state of the shared recognizer, use the AudioLevel, AudioPosition, AudioState, Enabled, PauseRecognizerOnRecognition, RecognizerAudioPosition, and State properties and the AudioLevelUpdated, AudioSignalProblemOccurred, AudioStateChanged, and StateChanged events.

- To synchronize changes to the recognizer, use the RequestRecognizerUpdate method. This is necessary because the shared recognizer uses more than one thread to perform tasks.

- To emulate input to the shared recognizer, use the EmulateRecognize and EmulateRecognizeAsync methods.
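The grammar-management, event, and emulation members listed above work together: emulated text is compared against the loaded grammars, and the outcome is reported through SpeechRecognized or SpeechRecognitionRejected. The following sketch is a hypothetical, language-neutral toy model of that routing written in Python. It is not the System.Speech API; `ToySharedRecognizer` and its methods are invented names, and the case-insensitive comparison is an assumption of the model (the real recognizer's comparison is controlled by the CompareOptions overloads).

```python
"""Hypothetical toy model (not System.Speech) of how emulated text input is
routed to recognition events according to the loaded grammars."""
from typing import Callable, Dict, List, Optional, Set


class ToySharedRecognizer:
    def __init__(self) -> None:
        # Grammar name -> phrases that grammar accepts (stored lowercased).
        self.grammars: Dict[str, Set[str]] = {}
        # Handler lists stand in for the SpeechRecognized / SpeechRecognitionRejected events.
        self.speech_recognized: List[Callable[[str, str], None]] = []
        self.speech_rejected: List[Callable[[str], None]] = []

    def load_grammar(self, name: str, phrases: List[str]) -> None:
        self.grammars[name] = {p.lower() for p in phrases}

    def unload_grammar(self, name: str) -> None:
        self.grammars.pop(name, None)

    def unload_all_grammars(self) -> None:
        self.grammars.clear()

    def emulate_recognize(self, text: str) -> Optional[str]:
        """Return the name of the matching grammar, or None if no grammar matches."""
        phrase = text.lower()  # assumption of this model: case-insensitive match
        for name, phrases in self.grammars.items():
            if phrase in phrases:
                for handler in self.speech_recognized:
                    handler(name, text)
                return name
        for handler in self.speech_rejected:
            handler(text)
        return None


recognizer = ToySharedRecognizer()
log = []
recognizer.speech_recognized.append(lambda g, t: log.append(("recognized", g, t)))
recognizer.speech_rejected.append(lambda t: log.append(("rejected", t)))
recognizer.load_grammar("colors", ["red", "green", "blue"])
recognizer.emulate_recognize("Green")   # accepted by the "colors" grammar
recognizer.emulate_recognize("purple")  # no loaded grammar accepts it
print(log)
```

In the real class, results arrive as RecognitionResult objects and the number of alternates is governed by MaxAlternates; the model omits both to keep the routing visible.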

The configuration of Windows Speech Recognition is managed through the Speech Properties dialog in Control Panel. This dialog is used to select the default desktop speech recognition engine and language, the audio input device, and the sleep behavior of speech recognition. If the configuration of Windows Speech Recognition is changed while the application is running (for instance, if speech recognition is disabled or the input language is changed), the change affects all SpeechRecognizer objects.

To create an in-process speech recognizer that is independent of Windows Speech Recognition, use the SpeechRecognitionEngine class.
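The difference between the shared recognizer and an in-process engine comes down to who owns the configuration. The Python sketch below is a hypothetical toy model, not either .NET class: `ToySharedClient` stands in for SpeechRecognizer objects that all read one desktop-wide configuration, and `ToyInProcessEngine` stands in for a SpeechRecognitionEngine that keeps its own copy. All names are invented for illustration.

```python
"""Hypothetical toy model: shared clients see one desktop-wide configuration,
while an in-process engine owns a private configuration."""
from dataclasses import dataclass


@dataclass
class RecognizerConfig:
    language: str = "en-US"
    enabled: bool = True


# A single object stands in for the Windows Speech Recognition settings
# that are managed from Control Panel.
DESKTOP_CONFIG = RecognizerConfig()


class ToySharedClient:
    """Analog of SpeechRecognizer: always reads the shared desktop configuration."""

    @property
    def config(self) -> RecognizerConfig:
        return DESKTOP_CONFIG


class ToyInProcessEngine:
    """Analog of SpeechRecognitionEngine: keeps its own private configuration."""

    def __init__(self, language: str = "en-US") -> None:
        self.config = RecognizerConfig(language=language)


a, b = ToySharedClient(), ToySharedClient()
engine = ToyInProcessEngine()

# Simulate the user changing the input language while the application runs.
DESKTOP_CONFIG.language = "de-DE"

print(a.config.language, b.config.language, engine.config.language)
```

The change reaches both shared clients but not the in-process engine, which mirrors the behavior the two paragraphs above describe.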

Note

Always call Dispose before you release your last reference to the speech recognizer. Otherwise, the resources it is using will not be freed until the garbage collector calls the recognizer object's Finalize method.

Constructors

| Constructor | Description |
|---|---|
| SpeechRecognizer() | Initializes a new instance of the SpeechRecognizer class. |

Properties

| Property | Description |
|---|---|
| AudioFormat | Gets the format of the audio being received by the speech recognizer. |
| AudioLevel | Gets the level of the audio being received by the speech recognizer. |
| AudioPosition | Gets the current location in the audio stream being generated by the device that is providing input to the speech recognizer. |
| AudioState | Gets the state of the audio being received by the speech recognizer. |
| Enabled | Gets or sets a value that indicates whether this SpeechRecognizer object is ready to process speech. |
| Grammars | Gets a collection of the Grammar objects that are loaded in this SpeechRecognizer instance. |
| MaxAlternates | Gets or sets the maximum number of alternate recognition results that the shared recognizer returns for each recognition operation. |
| PauseRecognizerOnRecognition | Gets or sets a value that indicates whether the shared recognizer pauses recognition operations while an application is handling a SpeechRecognized event. |
| RecognizerAudioPosition | Gets the current location of the recognizer in the audio input that it is processing. |
| RecognizerInfo | Gets information about the shared speech recognizer. |
| State | Gets the state of a SpeechRecognizer object. |

Methods

| Method | Description |
|---|---|
| Dispose() | Disposes the SpeechRecognizer object. |
| Dispose(Boolean) | Disposes the SpeechRecognizer object and releases resources used during the session. |
| EmulateRecognize(RecognizedWordUnit[], CompareOptions) | Emulates input of specific words to the shared speech recognizer, using text instead of audio for synchronous speech recognition, and specifies how the recognizer handles Unicode comparison between the words and the loaded speech recognition grammars. |
| EmulateRecognize(String) | Emulates input of a phrase to the shared speech recognizer, using text instead of audio for synchronous speech recognition. |
| EmulateRecognize(String, CompareOptions) | Emulates input of a phrase to the shared speech recognizer, using text instead of audio for synchronous speech recognition, and specifies how the recognizer handles Unicode comparison between the phrase and the loaded speech recognition grammars. |
| EmulateRecognizeAsync(RecognizedWordUnit[], CompareOptions) | Emulates input of specific words to the shared speech recognizer, using text instead of audio for asynchronous speech recognition, and specifies how the recognizer handles Unicode comparison between the words and the loaded speech recognition grammars. |
| EmulateRecognizeAsync(String) | Emulates input of a phrase to the shared speech recognizer, using text instead of audio for asynchronous speech recognition. |
| EmulateRecognizeAsync(String, CompareOptions) | Emulates input of a phrase to the shared speech recognizer, using text instead of audio for asynchronous speech recognition, and specifies how the recognizer handles Unicode comparison between the phrase and the loaded speech recognition grammars. |
| Equals(Object) | Determines whether the specified object is equal to the current object. (Inherited from Object) |
| GetHashCode() | Serves as the default hash function. (Inherited from Object) |
| GetType() | Gets the Type of the current instance. (Inherited from Object) |
| LoadGrammar(Grammar) | Loads a speech recognition grammar. |
| LoadGrammarAsync(Grammar) | Asynchronously loads a speech recognition grammar. |
| MemberwiseClone() | Creates a shallow copy of the current Object. (Inherited from Object) |
| RequestRecognizerUpdate() | Requests that the shared recognizer pause and update its state. |
| RequestRecognizerUpdate(Object) | Requests that the shared recognizer pause and update its state and provides a user token for the associated event. |
| RequestRecognizerUpdate(Object, TimeSpan) | Requests that the shared recognizer pause and update its state and provides an offset and a user token for the associated event. |
| ToString() | Returns a string that represents the current object. (Inherited from Object) |
| UnloadAllGrammars() | Unloads all speech recognition grammars from the shared recognizer. |
| UnloadGrammar(Grammar) | Unloads a specified speech recognition grammar from the shared recognizer. |
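Several EmulateRecognize and EmulateRecognizeAsync overloads take a System.Globalization.CompareOptions value that controls how emulated text is compared with grammar phrases. The Python sketch below is a rough, hypothetical analog of two of those flags, IgnoreCase and IgnoreWidth; the function `matches` is invented for illustration and does not reproduce the recognizer's actual comparison. It approximates width folding with Unicode NFKC normalization, which is broader than width folding alone.

```python
"""Hypothetical analog of CompareOptions.IgnoreCase / IgnoreWidth when
comparing emulated text with a grammar phrase. Not the recognizer's algorithm."""
import unicodedata


def matches(phrase: str, grammar_phrase: str,
            ignore_case: bool = False, ignore_width: bool = False) -> bool:
    def normalize(s: str) -> str:
        if ignore_width:
            # NFKC folds full-width forms such as "Ｒ" to "R" (plus other
            # compatibility mappings, so it is only an approximation).
            s = unicodedata.normalize("NFKC", s)
        if ignore_case:
            s = s.casefold()
        return s

    return normalize(phrase) == normalize(grammar_phrase)


print(matches("RED", "red"))                                        # exact comparison
print(matches("RED", "red", ignore_case=True))                      # case ignored
print(matches("ＲＥＤ", "red", ignore_case=True, ignore_width=True))  # case and width ignored
```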

Events

| Event | Description |
|---|---|
| AudioLevelUpdated | Occurs when the shared recognizer reports the level of its audio input. |
| AudioSignalProblemOccurred | Occurs when the recognizer encounters a problem in the audio signal. |
| AudioStateChanged | Occurs when the state changes in the audio being received by the recognizer. |
| EmulateRecognizeCompleted | Occurs when the shared recognizer finalizes an asynchronous recognition operation for emulated input. |
| LoadGrammarCompleted | Occurs when the recognizer finishes the asynchronous loading of a speech recognition grammar. |
| RecognizerUpdateReached | Occurs when the recognizer pauses to synchronize recognition and other operations. |
| SpeechDetected | Occurs when the recognizer detects input that it can identify as speech. |
| SpeechHypothesized | Occurs when the recognizer has recognized a word or words that may be a component of multiple complete phrases in a grammar. |
| SpeechRecognitionRejected | Occurs when the recognizer receives input that does not match any of the speech recognition grammars it has loaded. |
| SpeechRecognized | Occurs when the recognizer receives input that matches one of its speech recognition grammars. |
| StateChanged | Occurs when the running state of the Windows Desktop Speech Technology recognition engine changes. |
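SpeechHypothesized reports interim words that may still belong to more than one complete grammar phrase, while SpeechRecognized reports a completed match. The Python sketch below is a hypothetical illustration of that idea only: `candidate_phrases` is an invented helper, and it considers only phrases that begin with the words heard so far, which is a simplification of how a real recognizer forms hypotheses.

```python
"""Hypothetical illustration: which grammar phrases could the words heard so
far still be part of? (Prefix-only model; not the recognizer's algorithm.)"""
from typing import List


def candidate_phrases(heard: List[str], grammar: List[str]) -> List[str]:
    """Return grammar phrases whose leading words equal the words heard so far."""
    n = len(heard)
    return [phrase for phrase in grammar if phrase.split()[:n] == heard]


GRAMMAR = ["open file", "open folder", "close file"]

print(candidate_phrases(["open"], GRAMMAR))          # still ambiguous: a hypothesis
print(candidate_phrases(["open", "file"], GRAMMAR))  # one complete phrase matches
```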


Windows.Media.SpeechRecognition

Enables speech recognition for command and control within Windows Runtime apps.

Classes

| Class | Description |
|---|---|
| SpeechContinuousRecognitionCompletedEventArgs | Contains continuous recognition event data for the SpeechContinuousRecognitionSession.Completed event. |
| SpeechContinuousRecognitionResultGeneratedEventArgs | Contains event data for the SpeechContinuousRecognitionSession.ResultGenerated event. |
| SpeechContinuousRecognitionSession | Manages speech input for free-form dictation, or for an arbitrary sequence of words or phrases defined in a local grammar file constraint. |
| SpeechRecognitionCompilationResult | The result of compiling the constraints set for a SpeechRecognizer object. |
| SpeechRecognitionGrammarFileConstraint | A custom grammar constraint based on a list of words or phrases (defined in a Speech Recognition Grammar Specification (SRGS) file) that can be recognized by the SpeechRecognizer object. Note: speech recognition using a custom constraint is performed on the device. |
| SpeechRecognitionHypothesis | A recognition result fragment returned by the speech recognizer during an ongoing dictation session. The result fragment is useful for demonstrating that speech recognition is processing input during a lengthy dictation session. |
| SpeechRecognitionHypothesisGeneratedEventArgs | Contains event data for the SpeechRecognizer.HypothesisGenerated event. |
| SpeechRecognitionListConstraint | A custom grammar constraint based on a list of words or phrases that can be recognized by the SpeechRecognizer object. When initialized, this object is added to the Constraints collection. Note: speech recognition using a custom constraint is performed on the device. |
| SpeechRecognitionQualityDegradingEventArgs | Provides data for the SpeechRecognitionQualityDegradingEvent event. |
| SpeechRecognitionResult | The result of a speech recognition session. |
| SpeechRecognitionSemanticInterpretation | Represents the semantic properties of a recognized phrase in a Speech Recognition Grammar Specification (SRGS) grammar. |
| SpeechRecognitionTopicConstraint | A predefined grammar constraint (specified by SpeechRecognitionScenario) provided through a web service. |
| SpeechRecognitionVoiceCommandDefinitionConstraint | A constraint for a SpeechRecognizer object based on a file. |
| SpeechRecognizer | Enables speech recognition with either a default or a custom graphical user interface (GUI). |
| SpeechRecognizerStateChangedEventArgs | Provides data for the SpeechRecognizer.StateChanged event. |
| SpeechRecognizerTimeouts | The time spans during which a speech recognizer ignores silence or unrecognizable sounds (babble) and continues listening for speech input. |
| SpeechRecognizerUIOptions | Specifies the UI settings for the SpeechRecognizer.RecognizeWithUIAsync method. |
| VoiceCommandManager | Note: VoiceCommandManager may be altered or unavailable for releases after Windows Phone 8.1; use Windows.ApplicationModel.VoiceCommands.VoiceCommandDefinitionManager instead. A static class that enables installing command sets from a Voice Command Definition (VCD) file and accessing the installed command sets. |
| VoiceCommandSet | Note: VoiceCommandSet may be altered or unavailable for releases after Windows Phone 8.1; use Windows.ApplicationModel.VoiceCommands.VoiceCommandDefinition instead. Enables operations on a specific installed command set. |

Interfaces

| Interface | Description |
|---|---|
| ISpeechRecognitionConstraint | Represents a constraint for a SpeechRecognizer object. |

Enums

| Enum | Description |
|---|---|
| SpeechContinuousRecognitionMode | Specifies the behavior of the speech recognizer during a continuous recognition session. |
| SpeechRecognitionAudioProblem | Specifies the type of audio problem detected. |
| SpeechRecognitionConfidence | Specifies confidence levels that indicate how accurately a spoken phrase was matched to a phrase in an active constraint. |
| SpeechRecognitionConstraintProbability | Specifies the weighted value of a constraint for speech recognition. |
| SpeechRecognitionConstraintType | Specifies the grammar definition constraint used for speech recognition. |
| SpeechRecognitionResultStatus | Specifies the possible result states of a speech recognition session or from the compiling of grammar constraints. |
| SpeechRecognitionScenario | Specifies the scenario used to optimize speech recognition for a web-service constraint (created through a SpeechRecognitionTopicConstraint object). |
| SpeechRecognizerState | Specifies the state of the speech recognizer. |
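SpeechRecognitionConfidence lets an app decide how strictly to accept a result. The Python sketch below is a hypothetical illustration of filtering results by a minimum confidence level. The `Confidence` enum only mirrors the member names High, Medium, Low, and Rejected; its numeric values are chosen here purely for ordering and are an assumption of the sketch, and `accepted` is an invented helper rather than part of the Windows Runtime API.

```python
"""Hypothetical illustration: accept only results at or above a minimum
confidence level. Not the Windows Runtime API."""
from enum import IntEnum
from typing import List, Tuple


class Confidence(IntEnum):
    """Mirrors the SpeechRecognitionConfidence member names; in this sketch a
    lower value means a more confident match."""
    HIGH = 0
    MEDIUM = 1
    LOW = 2
    REJECTED = 3


def accepted(results: List[Tuple[str, Confidence]], minimum: Confidence) -> List[str]:
    """Keep the text of results whose confidence is at least `minimum`."""
    return [text for text, confidence in results if confidence <= minimum]


results = [
    ("play music", Confidence.HIGH),
    ("play muse", Confidence.LOW),
    ("stop", Confidence.MEDIUM),
    ("", Confidence.REJECTED),
]
print(accepted(results, Confidence.MEDIUM))
```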

Remarks

To use web-service constraints, speech input and dictation support must be enabled by turning on the 'Get to know me' option on the Settings > Privacy > Speech, inking, and typing page. For details, see 'Recognize speech input' in Speech recognition.